Please note: our followup analysis of 3.4.0-rc3 revealed additional faults in MongoDB’s replication algorithms which could lead to the loss of acknowledged documents–even with Majority Write Concern, journaling, and fsynced writes.

In May of 2013, we showed that MongoDB 2.4.3 would lose acknowledged writes at all consistency levels. Every write concern less than MAJORITY loses data by design due to rollbacks–but even WriteConcern.MAJORITY lost acknowledged writes, because when the server encountered a network error, it returned a successful, not a failed, response to the client. Happily, that bug was fixed a few releases later.

Since then I’ve improved Jepsen significantly and written a more powerful analyzer for checking whether or not a system is linearizable. I’d like to return to Mongo, now at version 2.6.7, to verify its single-document consistency. (Mongo 3.0 was released during my testing, and I expect they’ll be hammering out single-node data loss bugs for a little while.)

In this post, we’ll see that Mongo’s consistency model is broken by design: not only can “strictly consistent” reads see stale versions of documents, but they can also return garbage data from writes that never should have occurred. The former is (as far as I know) a new result which runs contrary to all of Mongo’s consistency documentation. The latter has been a documented issue in Mongo for some time. We’ll also touch on a result from the previous Jepsen post: almost all write concern levels allow data loss.

This analysis is brought to you by Stripe, where I now work on safety and data integrity–including Jepsen–full time. I’m delighted to work here and excited to talk about new consistency problems!

We’ll start with some background, methods, and an in-depth analysis of an example failure case, but if you’re in a hurry, you can skip ahead to the discussion.

Write consistency

First, we need to understand what MongoDB claims to offer. The Fundamentals FAQ says Mongo offers “atomic writes on a per-document-level”. From the last Jepsen post and the write concern documentation, we know Mongo’s default consistency level (Acknowledged) is unsafe. Why? Because operations are only guaranteed to be durable after they have been acknowledged by a majority of nodes. We must use write concern Majority to ensure that successful operations won’t be discarded by a rollback later.

Why can’t we use a lower write concern? Because rollbacks are only OK if any two versions of the document can be merged associatively, commutatively, and idempotently; i.e. they form a CRDT. Our documents likely don’t have this property.

For instance, consider an increment-only counter, stored as a single integer field in a MongoDB document. If you encounter a rollback and find two copies of the document with the values 5 and 7, the correct value of the counter depends on when they diverged. If the initial value was 0, we could have had five increments on one primary, and seven increments on another: the correct value is 5 + 7 = 12. If, on the other hand, the value on both replicas was 5, and only two increments occurred on an isolated primary, the correct value should be 7. Or it could be any value in between!
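
To make the arithmetic concrete, here is a minimal Python sketch. The function `merged_counter` and its `ancestor` parameter are illustrative names, not anything MongoDB provides: the point is that the correct merge needs the value at the moment the replicas diverged, which a rollback file alone doesn't give you.

```python
def merged_counter(ancestor: int, a: int, b: int) -> int:
    """Correct value of an increment-only counter after two replicas diverge.

    Each replica contributes the increments it applied since the common
    ancestor, so the merged value is ancestor + (a - ancestor) + (b - ancestor).
    """
    if ancestor > min(a, b):
        raise ValueError("an increment-only counter cannot drop below its ancestor")
    return a + b - ancestor


# The two copies hold 5 and 7; the right answer depends on the ancestor.
results = {ancestor: merged_counter(ancestor, 5, 7) for ancestor in range(0, 6)}
print(results)
```

With an ancestor of 0 the merge is 12, with an ancestor of 5 it is 7, and every ancestor in between gives a different answer.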

And this assumes your operations (e.g. incrementing) commute! If they’re order-dependent, Mongo will allow flat-out invalid writes to go through, like claiming the same username twice, document ID conflicts, transferring $40 + $30 = $70 out of a $50 account which is never supposed to go negative, and so on.
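
The overdraft case can be sketched the same way. This is a toy model, not MongoDB code: `Primary` is a hypothetical stand-in for one side of a split replica set, and each side checks the "never negative" invariant against its own local copy only.

```python
from dataclasses import dataclass


@dataclass
class Primary:
    """Toy stand-in for one node that believes it is primary."""
    balance: int

    def withdraw(self, amount: int) -> bool:
        # The invariant is only checked against this node's local state.
        if amount > self.balance:
            return False
        self.balance -= amount
        return True


start = 50
left, right = Primary(start), Primary(start)
ok_left = left.withdraw(40)    # accepted: 40 <= 50
ok_right = right.withdraw(30)  # accepted: 30 <= 50

# Both clients were told their transfer succeeded, so $70 left a $50 account.
true_balance = start - 40 - 30
print(ok_left, ok_right, true_balance)
```

Neither node ever saw a negative balance, yet the account as a whole is overdrawn by $20.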

Unless your documents are state-based CRDTs, the following table illustrates which Mongo write concern levels are actually safe:

| Write concern | Also called | Safe? |
|---|---|---|
| Unacknowledged | NORMAL | Unsafe: doesn't even bother checking for errors |
| Acknowledged (new default) | SAFE | Unsafe: not even on disk or replicated |
| Journaled | JOURNAL_SAFE | Unsafe: ops could be illegal or just rolled back by another primary |
| Fsynced | FSYNC_SAFE | Unsafe: ditto, constraint violations & rollbacks |
| Replica Acknowledged | REPLICAS_SAFE | Unsafe: ditto, another primary might overrule |
| Majority | MAJORITY | Safe: no rollbacks (but check the fsync/journal fields) |

So: if you use MongoDB, you should almost always be using the Majority write concern. Anything less is asking for data corruption or loss when a primary transition occurs.

Read consistency

The Fundamentals FAQ doesn’t just promise atomic writes, though: it also claims “fully-consistent reads”. The Replication Introduction goes on:

The primary accepts all write operations from clients. Replica set can have only one primary. Because only one member can accept write operations, replica sets provide strict consistency for all reads from the primary.

What does “strict consistency” mean? Mongo’s glossary defines it as

A property of a distributed system requiring that all members always reflect the latest changes to the system. In a database system, this means that any system that can provide data must reflect the latest writes at all times. In MongoDB, reads from a primary have strict consistency; reads from secondary members have eventual consistency.

The Replication docs agree: “By default, in MongoDB, read operations to a replica set return results from the primary and are consistent with the last write operation”, as distinct from “eventual consistency”, where “the secondary member’s state will eventually reflect the primary’s state.”

Read consistency is controlled by the Read Preference, which emphasizes that reads from the primary will see the “latest version”:

By default, an application directs its read operations to the primary member in a replica set. Reading from the primary guarantees that read operations reflect the latest version of a document.

And goes on to warn that:

…. modes other than primary can and will return stale data because the secondary queries will not include the most recent write operations to the replica set’s primary.

All read preference modes except primary may return stale data because secondaries replicate operations from the primary with some delay. Ensure that your application can tolerate stale data if you choose to use a non-primary mode.

So, if we write at write concern Majority, and read with read preference Primary (the default), we should see the most recently written value.

CaS rules everything around me

When writes and reads are concurrent, what does “most recent” mean? Which states are we guaranteed to see? What could we see?

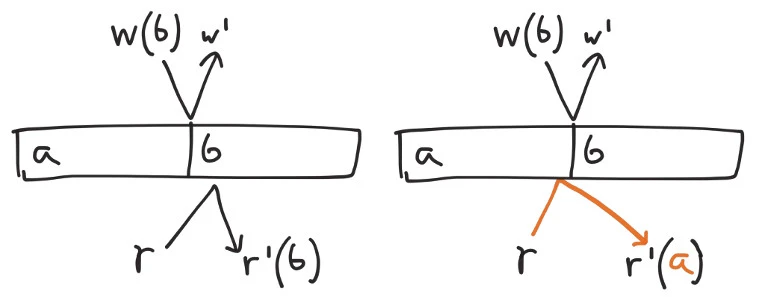

For concurrent operations, the absence of synchronized clocks prevents us from establishing a total order. We must allow each operation to come just before, or just after, any other in-flight writes. If we write a, initiate a write of b, then perform a read, we could see either a or b depending on which operation takes place first.

On the other hand, we obviously shouldn’t interact with a value from the future–we have no way to tell what those operations will be. The latest possible state an operation can see is the one just prior to the response received by the client.

And because we need to see the “most recent write operation”, we should not be able to interact with any state prior to the ops concurrent with the most recently acknowledged write. It should be impossible, for example, to write a, write b, then read a: since the writes of a and b are not concurrent, the second should always win.
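
These ordering rules boil down to comparing intervals. As a small sketch (the `Op` tuple and the two helper names are my own, and the timestamps are made up for illustration), two operations are concurrent exactly when neither one finished before the other began:

```python
from typing import NamedTuple


class Op(NamedTuple):
    name: str
    invoke: float    # when the client sent the request
    complete: float  # when the client received the response


def happens_before(x: Op, y: Op) -> bool:
    """x finished before y started, so every observer must order x first."""
    return x.complete < y.invoke


def concurrent(x: Op, y: Op) -> bool:
    """Neither op precedes the other, so either order is allowed."""
    return not happens_before(x, y) and not happens_before(y, x)


write_a = Op("write a", 0, 1)
write_b = Op("write b", 2, 5)
read = Op("read", 3, 4)

print(happens_before(write_a, write_b))  # a is strictly before b
print(concurrent(write_b, read))         # the read overlaps the write of b
```

Here the read overlaps only the write of b, so it may return a or b. Had the write of b completed before the read began, returning a would be illegal.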

This is a common strong consistency model for concurrent data structures called linearizability. In a nutshell, every successful operation must appear to occur atomically at some time between its invocation and response.

So in Jepsen, we’ll model a MongoDB document as a linearizable compare-and-set (CaS) register, supporting three operations:

- `write(x')`: set the register’s value to `x'`

- `read(x)`: read the current value `x`. Only succeeds if the current value is actually `x`.

- `cas(x, x')`: if and only if the value is currently `x`, set it to `x'`.

We can express this consistency model as a single-threaded datatype in Clojure. Given a register containing a value, and an operation op, the step function returns the new state of the register–or a special inconsistent value if the operation couldn’t take place.

    (defrecord CASRegister [value]
      Model
      (step [r op]
        (condp = (:f op)
          :write (CASRegister. (:value op))
          :cas   (let [[cur new] (:value op)]
                   (if (= cur value)
                     (CASRegister. new)
                     (inconsistent (str "can't CAS " value " from " cur
                                        " to " new))))
          :read  (if (or (nil? (:value op))
                         (= value (:value op)))
                   r
                   (inconsistent (str "can't read " (:value op)
                                      " from register " value))))))

Then we’ll have Jepsen generate a mix of read, write, and CaS operations, and apply those operations to a five-node Mongo cluster. Over the course of a few minutes we’ll have five clients perform those random read, write, and CaS ops against the cluster, while a special nemesis process creates and resolves network partitions to induce cluster transitions.

Finally, we’ll have Knossos analyze the resulting concurrent history of all clients’ operations, in search of a linearizable path through the history.
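
Knossos is far more sophisticated than anything that fits in a blog post, but the core idea of "a linearizable path through the history" can be sketched with a brute-force search. The Python below is an illustration only: `Op`, `step`, and `linearizable` are names I've invented, the timestamps are made up, and the search is exponential in the number of concurrent operations. It tries every order that respects real time, applies the register model at each step, and treats crashed operations (those with no completion time) as optional.

```python
import math
from dataclasses import dataclass
from typing import Any

INCONSISTENT = object()


@dataclass(frozen=True)
class Op:
    f: str                      # "read", "write", or "cas"
    value: Any                  # read/write: a value; cas: (old, new)
    invoke: float               # when the client sent the request
    complete: float = math.inf  # math.inf marks a crashed (:info) op


def step(state, op):
    """Single-threaded register model: return the new state or INCONSISTENT."""
    if op.f == "write":
        return op.value
    if op.f == "cas":
        old, new = op.value
        return new if state == old else INCONSISTENT
    if op.f == "read":
        return state if op.value in (None, state) else INCONSISTENT
    raise ValueError(f"unknown op {op.f!r}")


def linearizable(history, initial=None):
    """Search for an order that respects real time and the register model."""
    def search(state, remaining):
        required = [history[i] for i in remaining
                    if history[i].complete != math.inf]
        if not required:
            return True  # every acknowledged op has been placed
        # An op cannot go next if some unplaced acknowledged op
        # completed before it was invoked.
        deadline = min(op.complete for op in required)
        for i in remaining:
            op = history[i]
            if op.invoke > deadline:
                continue
            new_state = step(state, op)
            if new_state is not INCONSISTENT and search(new_state, remaining - {i}):
                return True
        return False

    return search(initial, frozenset(range(len(history))))


sequential = [Op("write", "a", 0, 1), Op("write", "b", 2, 3), Op("read", "a", 4, 5)]
overlapping = [Op("write", "a", 0, 1), Op("write", "b", 2, 5), Op("read", "a", 3, 4)]
crashed_cas = [Op("cas", (0, 4), 0), Op("read", 4, 1, 2)]

print(linearizable(sequential))              # write a, write b, read a
print(linearizable(overlapping))             # the read overlaps the write of b
print(linearizable(crashed_cas, initial=0))  # the crashed CaS explains the read
```

The first history is the "write a, write b, read a" case from earlier and has no valid order. The second does, because the read is concurrent with the write of b. The third shows why crashed operations matter: a read of 4 is only explainable if the crashed CaS took effect.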

Inconsistent reads

Surprise! Even when all writes and CaS ops use the Majority write concern, and all reads use the Primary read preference, operations on a single document in MongoDB are not linearizable. Reads taking place just after the start of a network partition demonstrate impossible behaviors.

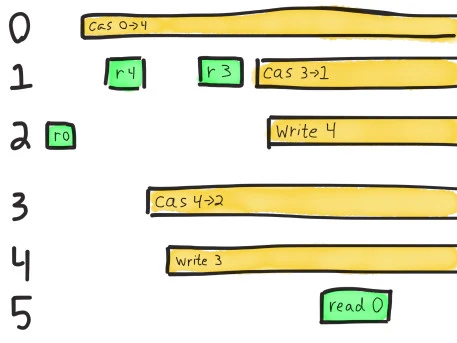

In this history, an anomaly appears shortly after the nemesis isolates nodes n1 and n3 from n2, n4, and n5. Each line shows a single-threaded process (e.g. 2) performing (e.g. :invoke) an operation (e.g. :read), with a value (e.g. 3).

An :invoke indicates the start of an operation. If it completes successfully, the process logs a corresponding :ok. If it fails (by which we mean the operation definitely did not take place) we log :fail instead. If the operation crashes–for instance, if the network drops, a machine crashes, a timeout occurs, etc.–we log an :info message, and that operation remains concurrent with every subsequent op in the history. Crashed operations could take effect at any future time.

    Not linearizable. Linearizable prefix was:
    2 :invoke :read 3
    4 :invoke :write 3
    ...
    4 :invoke :read 0
    4 :ok :read 0
    :nemesis :info :start "Cut off {:n5 #{:n3 :n1},
                                    :n2 #{:n3 :n1},
                                    :n4 #{:n3 :n1},
                                    :n1 #{:n4 :n2 :n5},
                                    :n3 #{:n4 :n2 :n5}}"
    1 :invoke :cas [1 4]
    1 :fail :cas [1 4]
    3 :invoke :cas [4 4]
    3 :fail :cas [4 4]
    2 :invoke :cas [1 0]
    2 :fail :cas [1 0]
    0 :invoke :read 0
    0 :ok :read 0
    4 :invoke :read 0
    4 :ok :read 0
    1 :invoke :read 0
    1 :ok :read 0
    3 :invoke :cas [2 1]
    3 :fail :cas [2 1]
    2 :invoke :read 0
    2 :ok :read 0
    0 :invoke :cas [0 4]
    4 :invoke :cas [2 3]
    4 :fail :cas [2 3]
    1 :invoke :read 4
    1 :ok :read 4
    3 :invoke :cas [4 2]
    2 :invoke :cas [1 1]
    2 :fail :cas [1 1]
    4 :invoke :write 3
    1 :invoke :read 3
    1 :ok :read 3
    2 :invoke :cas [4 2]
    2 :fail :cas [4 2]
    1 :invoke :cas [3 1]
    2 :invoke :write 4
    0 :info :cas :network-error
    2 :info :write :network-error
    3 :info :cas :network-error
    4 :info :write :network-error
    1 :info :cas :network-error
    5 :invoke :write 1
    5 :fail :write 1
    ... more failing ops which we can ignore since they didn't take place ...
    5 :invoke :read 0
    Followed by inconsistent operation:
    5 :ok :read 0
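
The :invoke, :ok, :fail, and :info rules can be applied mechanically to a log like this one. Here is a rough Python sketch of that pairing step; `pair_history` is a hypothetical helper rather than Jepsen's code, the tuple layout is my own, and `events` is a hand-picked subset of the log above. Log positions stand in for timestamps.

```python
import math


def pair_history(events):
    """Turn (process, type, f, value) log entries into operation intervals."""
    pending = {}  # process -> (log index, f, value) of its open invocation
    ops = []
    for index, (process, kind, f, value) in enumerate(events):
        if kind == "invoke":
            pending[process] = (index, f, value)
            continue
        start, f, invoked_value = pending.pop(process)
        if kind == "ok":
            ops.append({"process": process, "f": f, "value": value,
                        "invoke": start, "complete": index})
        elif kind == "info":
            # Crashed: may take effect at any later time, so it never "ends".
            ops.append({"process": process, "f": f, "value": invoked_value,
                        "invoke": start, "complete": math.inf})
        # kind == "fail": the op definitely did not happen, so drop it.
    return ops


events = [
    (0, "invoke", "cas", (0, 4)),
    (1, "invoke", "read", 4),
    (1, "ok", "read", 4),
    (4, "invoke", "write", 3),
    (0, "info", "cas", "network-error"),
    (4, "info", "write", "network-error"),
    (5, "invoke", "write", 1),
    (5, "fail", "write", 1),
    (5, "invoke", "read", 0),
    (5, "ok", "read", 0),
]
ops = pair_history(events)
for op in ops:
    print(op)
```

The failed write of 1 vanishes, the two crashed operations get an open-ended interval, and the successful reads keep the window in which they must have taken effect.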

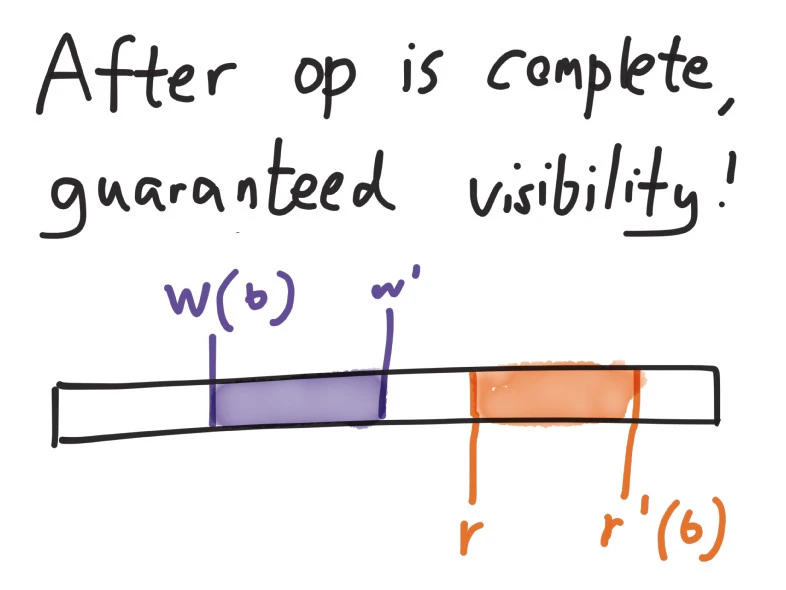

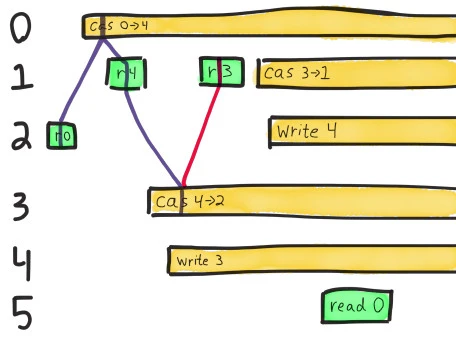

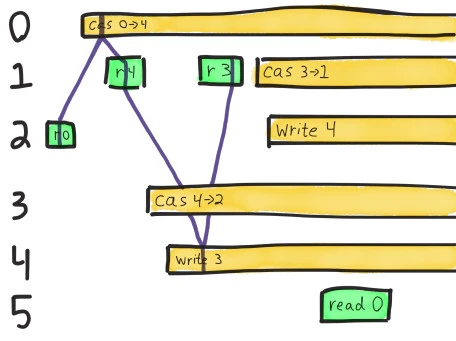

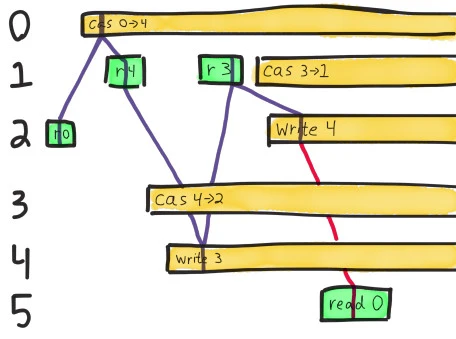

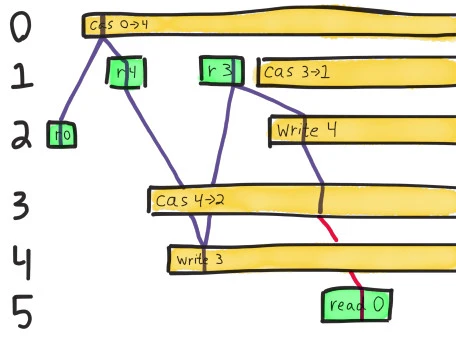

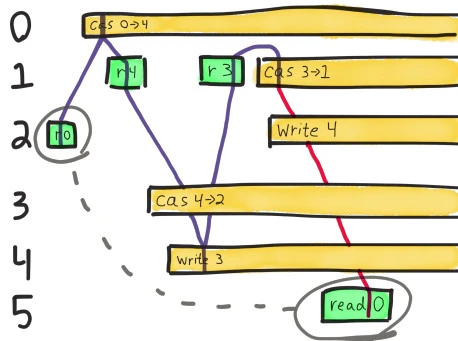

This is a little easier to understand if we sketch a diagram of the last few operations–the events occurring just after the network partition begins. In this notation, time moves from left to right, and each process is a horizontal track. A green bar shows the interval between :invoke and :ok for a successful operation. Processes that crashed with :info are shown as yellow bars running to infinity–they’re concurrent with all future operations.

In order for this history to be linearizable, we need to find a path which always moves forward in time, touches each OK (green) operation once, and may touch crashed (yellow) operations at most once. Along that path, the rules we described for a CaS register should hold–writes set the value, reads must see the current value, and compare-and-set ops set a new value iff the current value matches.

The very first operation here is process 2’s read of the value 0. We know the value must be zero when it completes, because there are no other concurrent ops.

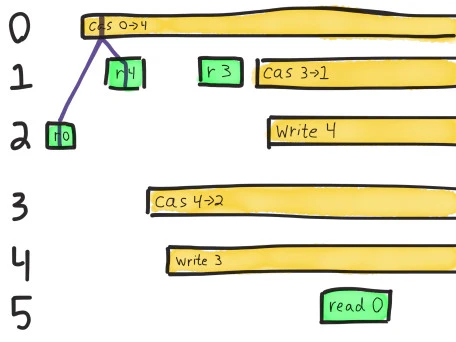

The next read we have to satisfy is process 1’s read of 4. The only operation that could have taken place between those two reads is process 0’s crashed CaS from 0 to 4, so we’ll use that as an intermediary.

Note that this path always moves forward in time: linearizability requires that operations take effect sometime between their invocation and their completion. We can choose any point along the operation’s bar for the op to take effect, but can’t travel through time by drawing a line that moves back to the left! Also note that along the purple line, our single-threaded model of a register holds: we read 0, change 0->4, then read 4.

Process 1 goes on to read a new value: 3. We have two operations which could take place before that read. Executing a compare-and-set from 4 to 2 would be legal because the current value is 4, but that would conflict with a subsequent read of 3. Instead we apply process 4’s write of 3 directly.

Now we must find a path leading from 3 to the final read of 0. We could write 4, and optionally CaS that 4 to 2, but neither of those values is consistent with a read of 0.

We could also CaS 3 to 1, which would give us the value 1, but that’s not 0 either! And applying the write of 4 and its dependent paths won’t get us anywhere either; we already showed those are inconsistent with reading 0. We need a write of 0, or a CaS to 0, for this history to make sense–and no such operation could possibly have happened here.

Each of these failed paths is represented by a different “possible world” in the Knossos analysis. At the end of the file, Knossos comes to the same conclusion we drew from the diagram: the register could have been 1, 2, 3, or 4–but not 0. This history is illegal.
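
We can double-check that conclusion by enumerating the possible worlds by hand. The sketch below is my own simplification, not Knossos: it assumes, following the walkthrough above, that the register holds 3 after process 1's read, and that the only operations still in play are the three crashed ones (the write of 4, the CaS from 4 to 2, and the CaS from 3 to 1). `reachable` is an illustrative name.

```python
def reachable(value, pending):
    """All register values reachable from `value` by applying any subset of
    the pending crashed ops, each at most once, in any order."""
    seen = set()

    def explore(current, remaining):
        seen.add(current)
        for i, (f, arg) in enumerate(remaining):
            rest = remaining[:i] + remaining[i + 1:]
            if f == "write":
                explore(arg, rest)
            elif f == "cas" and arg[0] == current:
                explore(arg[1], rest)

    explore(value, tuple(pending))
    return seen


pending = [("write", 4), ("cas", (4, 2)), ("cas", (3, 1))]
values = reachable(3, pending)
print(sorted(values))
```

No combination of the crashed operations produces 0, which is why the final read cannot be placed anywhere in the history.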

It’s almost as if process 5’s final read of zero is connected to the state that process 2 saw, just prior to the network partition. As if the state of the system weren’t linear after all–a read was allowed to travel back in time to that earlier state. Or, alternatively, the state of the system split in two–one for each side of the network partition–writes occurring on one side, and on the other, the value remaining 0.

Dirty reads and stale reads

We know from this test that MongoDB does not offer linearizable CaS registers. But why?

One possibility is that this is the result of Mongo’s read-uncommitted isolation level. Although the docs say “For all inserts and updates, MongoDB modifies each document in isolation: clients never see documents in intermediate states,” they also warn:

MongoDB allows clients to read documents inserted or modified before it commits these modifications to disk, regardless of write concern level or journaling configuration…

For systems with multiple concurrent readers and writers, MongoDB will allow clients to read the results of a write operation before the write operation returns.

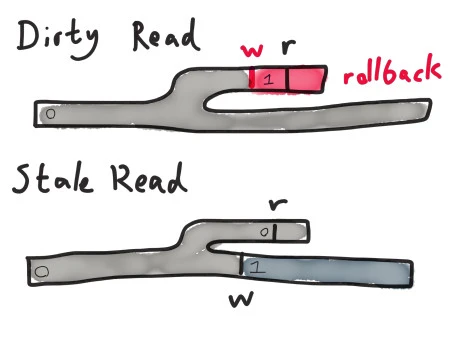

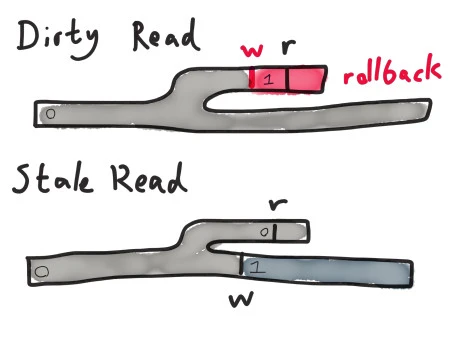

Which means that clients can read documents in intermediate states. Imagine the network partitions, and for a brief time, there are two primary nodes–each sharing the initial value 0. Only one of them, connected to a majority of nodes, can successfully execute writes with write concern Majority. The other, which can only see a minority, will eventually time out and step down–but this takes a few seconds.

If a client writes (or CaS’s) the value on the minority primary to 1, MongoDB will happily modify that primary’s local state before confirming with any secondaries that the write is safe to perform! It’ll be changed back to 0 as a part of the rollback once the partition heals–but until that time, reads against the minority primary will see 1, not 0! We call this anomaly a dirty read because it exposes garbage temporary data to the client.

Alternatively, no changes could occur on the minority primary–or whatever writes do take place leave the value unchanged. Meanwhile, on the majority primary, clients execute successful writes or CaS ops, changing the value to 1. If a client executes a read against the minority primary, it will see the old value 0, even though a write of 1 has taken place.

Because Mongo allows dirty reads from the minority primary, we know it must also allow clean but stale reads against the minority primary. Both of these anomalies are present in MongoDB.
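
Both anomalies fall out of a very small model. The Python below is a toy, not a description of MongoDB's internals: `Primary`, `write_majority`, and `visible_nodes` are invented for illustration, and the only behavior modeled is "apply the write locally first, then find out whether a majority acknowledged it."

```python
class Primary:
    """Toy model of a node that believes it is primary."""

    def __init__(self, value, visible_nodes, cluster_size=5):
        self.value = value
        self.visible_nodes = visible_nodes
        self.cluster_size = cluster_size

    def write_majority(self, value):
        # Read-uncommitted behavior: local state changes before any
        # secondary has confirmed the write.
        self.value = value
        majority = self.cluster_size // 2 + 1
        return self.visible_nodes >= majority  # False: the client sees a timeout

    def read(self):
        return self.value


# Dirty read: the minority primary (n1 and n3 only) exposes a doomed write.
minority = Primary(0, visible_nodes=2)
dirty_acked = minority.write_majority(1)
dirty_value = minority.read()

# Stale read: the majority primary commits 1; the minority still says 0.
minority, majority = Primary(0, visible_nodes=2), Primary(0, visible_nodes=3)
stale_acked = majority.write_majority(1)
stale_value = minority.read()

print(dirty_acked, dirty_value)  # write never acknowledged, yet visible
print(stale_acked, stale_value)  # write acknowledged, yet invisible
```

In the first scenario a client reads a value that will be rolled back. In the second, a client reads the old value after a newer one was acknowledged. Hiding uncommitted data fixes only the first.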

Dirty reads are a known issue, but to my knowledge, nobody is aware that Mongo allows stale reads at the strongest consistency settings. Since Mongo’s documentation repeatedly states this anomaly should not occur, I’ve filed SERVER-17975.

What does that leave us with?

Even with Majority write concern and Primary read preference, reads can see old versions of documents. You can write a, then write b, then read a back. This invalidates a basic consistency invariant for registers: Read Your Writes.

Mongo also allows you to read alternating fresh and stale versions of a document–successive reads could see a, then b, then a again, and so on. This violates Monotonic Reads.
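
Both guarantees are easy to state as checks over what a single process observes. This sketch assumes each version of the document carries a number that increases with every acknowledged write (here a is 1 and b is 2); the function names and the session format are my own.

```python
def monotonic_reads_ok(versions_read):
    """Successive reads by one process never go backwards."""
    return all(later >= earlier
               for earlier, later in zip(versions_read, versions_read[1:]))


def read_your_writes_ok(session):
    """A process never reads a version older than its own last write.

    `session` is a list of ("write", version) or ("read", version) pairs
    for a single process, in program order.
    """
    last_written = None
    for kind, version in session:
        if kind == "write":
            last_written = version
        elif last_written is not None and version < last_written:
            return False
    return True


a, b = 1, 2
print(monotonic_reads_ok([a, b, a]))                                   # a, b, a
print(read_your_writes_ok([("write", a), ("write", b), ("read", a)]))  # reads a
```

Both checks fail for the behaviors described above: the a, b, a sequence goes backwards, and reading a after writing b ignores the process's own write.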

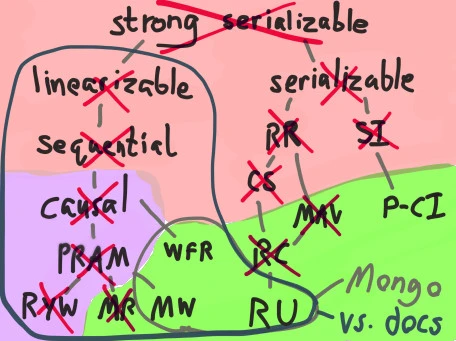

Because both of these consistency models are implied by the PRAM memory model, we know MongoDB cannot offer PRAM consistency–which in turn rules out causal, sequential, and linearizable consistency.

Because Mongo allows you to read uncommitted garbage data, we also know MongoDB can’t offer Read Committed, Cursor Stability, Monotonic Atomic View, Repeatable Read, or serializability. On the map of consistency models above, this leaves Writes Follow Reads, Monotonic Write, and Read Uncommitted–all of which, incidentally, are totally-available consistency models even when partitions occur.

Conversely, Mongo’s documentation repeatedly claims that one can read “the latest version of a document”, and that Mongo offers “immediate consistency”. These properties imply that reads, writes, and CaS against a single document should be linearizable. Linearizable consistency implies that Mongo should also ensure:

- Sequential consistency: all processes agree on op order

- Causal consistency: causally related operations occur in order

- PRAM: a parallel memory model

- Read Your Writes: a process can only read data from after its last write

- Monotonic Read: a process’s successive reads must occur in order

- Monotonic Write: a process’s successive writes must occur in order

- Write Follows Read: a process’s writes must logically follow its last read

Mongo 2.6.7 (and presumably 3.0.0, which has the same read-uncommitted semantics) only ensures the last two invariants. I argue that this is neither “strict” nor “immediate” consistency.

How bad are dirty reads?

Read uncommitted allows all kinds of terrible anomalies we probably don’t want as MongoDB users.

For instance, suppose we have a user registration service keyed by a unique username. Now imagine a partition occurs, and two users–Alice and Bob–try to claim the same username–one on each side of the partition. Alice’s request is routed to the majority primary, and she successfully registers the account. Bob, talking to the minority primary, will see his account creation request time out. The minority primary will eventually roll his account back to a nonexistent state, and when the partition heals, accept Alice’s version.

But until the minority primary detects the failure, Bob’s invalid user registration will still be visible for reads. After registration, the web server redirects Alice to /my/account to show her the freshly created account. However, this HTTP request winds up talking to a server whose client still thinks the minority primary is valid–and that primary happily responds to a read request for the account with Bob’s information.

Alice’s page loads, and in place of her own data, she sees Bob’s name, his home address, his photograph, and other things that never should have been leaked between users.

You can probably imagine other weird anomalies. Temporarily visible duplicate values for unique indexes. Locks that appear to be owned by two processes at once. Clients seeing purchase orders that never went through.

Or consider a reconciliation process that scans the list of all transactions a client has made to make sure their account balance is correct. It sees an attempted but invalid transaction that never took place, and happily sets the user’s balance to reflect that impossible transaction. The mischievous transaction subsequently disappears on rollback, leaving customer support to puzzle over why the numbers don’t add up.

Or, worse, an admin goes in to fix the rollback, assumes the invalid transaction should have taken place, and applies it to the new primary. The user sensibly retried their failed purchase, so they wind up paying twice for the same item. Their account balance goes negative. They get hit with an overdraft fine and have to spend hours untangling the problem with support.

Read-uncommitted is scary.

What if we just fix read-uncommitted?

Mongo’s engineers initially closed the stale-read ticket as a duplicate of SERVER-18022, which addresses dirty reads. However, as the diagram illustrates, only showing committed data on the minority primary will not solve the problem of stale reads. Even if the minority primary accepts no writes at all, successful writes against the majority node will allow us to read old values.

That invalidates Monotonic Read, Read Your Writes, PRAM, causal, sequential, and linearizable consistency. The anomalies are less terrifying than Read Uncommitted, but still weird.

For instance, let’s say two documents are always supposed to be updated one after the other–like creating a new comment document, and writing a reference to it into a user’s feed. Stale reads allow you to violate the implicit foreign key constraint there: the user could load their feed and see a reference to comment 123, but looking up comment 123 in Mongo returns Not Found.

A user could change their name from Charles to Désirée, have the change go through successfully, but when the page reloads, still see their old name. Seeing old values could cause users to repeat operations that they should have only attempted once–for instance, adding two or three copies of an item to their cart, or double-posting a comment. Your clients may be other programs, not people–and computers are notorious for retrying “failed” operations very fast.

Stale reads can also cause lost updates. For instance, a web server might accept a request to change a user’s profile photo information, write the new photo URL to the user record in Mongo, and contact the thumbnailer service to generate resized copies and publish them to S3. The thumbnailer sees the old photo, not the newly written one. It happily resizes it, and uploads the old avatar to S3. As far as the backend and thumbnailer are concerned, everything went just fine–but the user’s photo never changes. We’ve lost their update.

What if we just pretend this is how it’s supposed to work?

Mongo then closed the ticket, claiming it was working as designed.

What are databases? We just don’t know.

So where does that leave us?

Despite claiming “immediate” and “strict consistency”, with reads that see “the most recent write operations”, and “the latest version”, MongoDB, even at the strongest consistency levels, allows reads to see old values of documents or even values that never should have been written. Even when Mongo supports Read Committed isolation, the stale read problem will likely persist without a fundamental redesign of the read process.

SERVER-18022 targets consistent reads for Mongo 3.1. SERVER-17975 has been re-opened, and I hope Mongo’s engineers will consider the problem and find a way to address it. Preventing stale reads requires coupling the read process to the oplog replication state machine in some way, which will probably be subtle–but Consul and etcd managed to do it in a few months, so the problem’s not insurmountable!

In the meantime, if your application requires linearizable reads, I can suggest a workaround! CaS operations appear (insofar as I’ve been able to test) to be linearizable, so you can perform a read, then try to findAndModify the current value, changing some irrelevant field. If that CaS succeeds, you know the document had that state at some time between the invocation of the read and the completion of the CaS operation. You will, however, incur an IO and latency penalty for the extra round-trips.
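
The shape of that workaround, sketched in Python against a stand-in store rather than a real driver. Everything here is hypothetical: `confirmed_read`, `FakeStore`, and the `touch` field are invented for illustration. In a real application, `compare_and_set` would be a findAndModify at Majority write concern that filters on the fields you just read, and the update has to actually change something, since an identity CaS may be optimized away.

```python
def confirmed_read(read, compare_and_set):
    """Return a document only if a CaS proves it was current, else None."""
    doc = read()
    # Bump a throwaway field so the CaS is not an identity update.
    bumped = dict(doc, touch=doc.get("touch", 0) + 1)
    return doc if compare_and_set(doc, bumped) else None


class FakeStore:
    """In-memory stand-in: `committed` is the truth, `stale` a lagging copy."""

    def __init__(self, doc):
        self.committed = dict(doc)
        self.stale = dict(doc)

    def read_stale(self):
        return dict(self.stale)

    def read_fresh(self):
        return dict(self.committed)

    def compare_and_set(self, expected, new):
        if self.committed != expected:
            return False
        self.committed = dict(new)
        return True


store = FakeStore({"value": "a"})
store.committed = {"value": "b"}  # a write the stale copy has not seen

stale_result = confirmed_read(store.read_stale, store.compare_and_set)
fresh_result = confirmed_read(store.read_fresh, store.compare_and_set)
print(stale_result, fresh_result)
```

A stale read fails its confirming CaS and is rejected, so the caller can retry. A current read is confirmed, at the cost of one extra write per read.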

And remember: always use the Majority write concern, unless your data is structured as a CRDT! If you don’t, you’re looking at lost updates, and that’s way worse than any of the anomalies we’ve discussed here!

Finally, read the documentation for the systems you depend on thoroughly–then verify their claims for yourself. You may discover surprising results!

Next, on Jepsen: Elasticsearch 1.5.0

My thanks to Stripe and Marc Hedlund for giving me the opportunity to perform this research, and a special thanks to Peter Bailis, Michael Handler, Coda Hale, Leif Walsh, and Dan McKinley for their comments on early drafts. Asya Kamsky pointed out that Mongo will optimize away identity CaS operations, which prevents their use as a workaround.
